This week we received two brand-new devices, namely two shiny Samsung SUR40 multi-touch interactive surfaces (i.e., Microsoft’s new Surface, or Surface 2). In my opinion, their main advantage is not size or display resolution (there are bigger surfaces out there), but their sensing technology, PixelSense. In a nutshell, every pixel also acts as a photo sensor. Therefore, you literally see more than simple contact points. It’s like a flat camera: objects close to the surface are clearly visible and can be identified. Of course, this is black-and-white only (PixelSense is based on infrared), and the farther an object is from the surface, the blurrier it gets. Still, it is sufficient to see contours and detect visual tags. And all of that is integrated into a flat full HD LCD screen.

Unpacking and assembling (attaching the legs) is straightforward. The ASCII-based boot process is a bit ugly, though, and does not seem to fit a device like this. Windows 7 is pre-installed and can be operated via touch and the on-screen keyboard out of the box. So far, so good. There are, however, a couple of issues which shouldn’t be there given the price tag.

Calibration

First, there is an icon prominently placed on the desktop which reads “SUR40 Calibration Tool”. Since it’s there, it must be there for a purpose. Or so you would think… After starting, the calibration tool asks you to connect a USB keyboard (goodbye, touch input). Then it demands a calibration board. Wait, did I miss something? No, there is no such board included. Luckily, I canceled at this point. Others who continued apparently killed the touch input and turned the surface into an expensive monitor.

[important]A recent post by James Mali at Microsoft’s Surface blog recommends “to leave it [the calibration tool] alone unless you already have your calibration board and you really need to recalibrate your unit”. Supposedly, recalibration is not required for at least 1,000 hours of use, and customers can expect to receive their calibration boards over the next few days.[/important]

Finger Touch Input

Another issue is how Windows treats touch input. The underlying vision engine can identify finger touches and ignore others (e.g., touches caused by an arm hovering above the surface). But Windows seems to ignore this information and treats every contact as a touch, so a lot of unintended touch input is generated. Using general Windows applications requires a bit of patience, as the cursor jumps around frequently. I hope there is a setting somewhere to change this and filter the input; I haven’t found it yet, though.

[important]There are some related questions on Microsoft’s Developer Network forums (post 1, post 2) – but the only solution seems to consist of implementing custom applications that explicitly check for finger touch input.[/important]
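To illustrate what “explicitly checking for finger touch input” amounts to, here is a minimal sketch in Python. It is not the Surface SDK API: the `Contact` type, the `ContactKind` values, and the `finger_contacts` helper are all hypothetical names made up for this example, and it simply assumes that each contact arrives with a classification attached by the vision engine.

```python
from dataclasses import dataclass
from enum import Enum


class ContactKind(Enum):
    # Hypothetical classification labels, assumed to come from the vision engine.
    FINGER = "finger"
    TAG = "tag"
    BLOB = "blob"  # anything else, e.g. a forearm hovering above the surface


@dataclass(frozen=True)
class Contact:
    # Hypothetical contact record: a position in pixels plus a classification.
    x: float
    y: float
    kind: ContactKind


def finger_contacts(contacts):
    """Return only the contacts that were classified as fingers."""
    return [c for c in contacts if c.kind is ContactKind.FINGER]


# One made-up frame: a fingertip, a hovering arm, and a tagged object.
frame = [
    Contact(412.0, 300.5, ContactKind.FINGER),
    Contact(900.0, 820.0, ContactKind.BLOB),
    Contact(1500.0, 210.0, ContactKind.TAG),
]

fingers = finger_contacts(frame)
print(len(fingers), "of", len(frame), "contacts are fingers")
```

The point is only that the filtering has to happen inside the application: anything that is not a recognized finger gets dropped before it can move a cursor or press a button.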

Raw Images

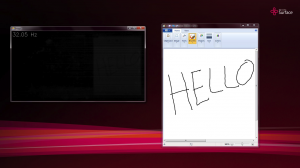

Here are some screenshots of what the surface sees for different input (raw image: 1,920 x 1,040px):

Apparently, PixelSense also sees parts of the screen output (notice the Paint window’s shadow in the raw image – click to zoom in). Raw image shown using EmguCV.

Hey! Sorry, my English is not good.

I need something like this for a bar application. I have been reading a lot about multi-touch tables, but I can’t find anything like this that works without cameras. Do you think it is possible to build your own touch table with this technology?

Thanks

Hi, doing something like PixelSense on your own is difficult. If you just want something flat, have you looked into laser light planes (LLP) or even touch overlays? Of course, these would give you contact points only. Dominik